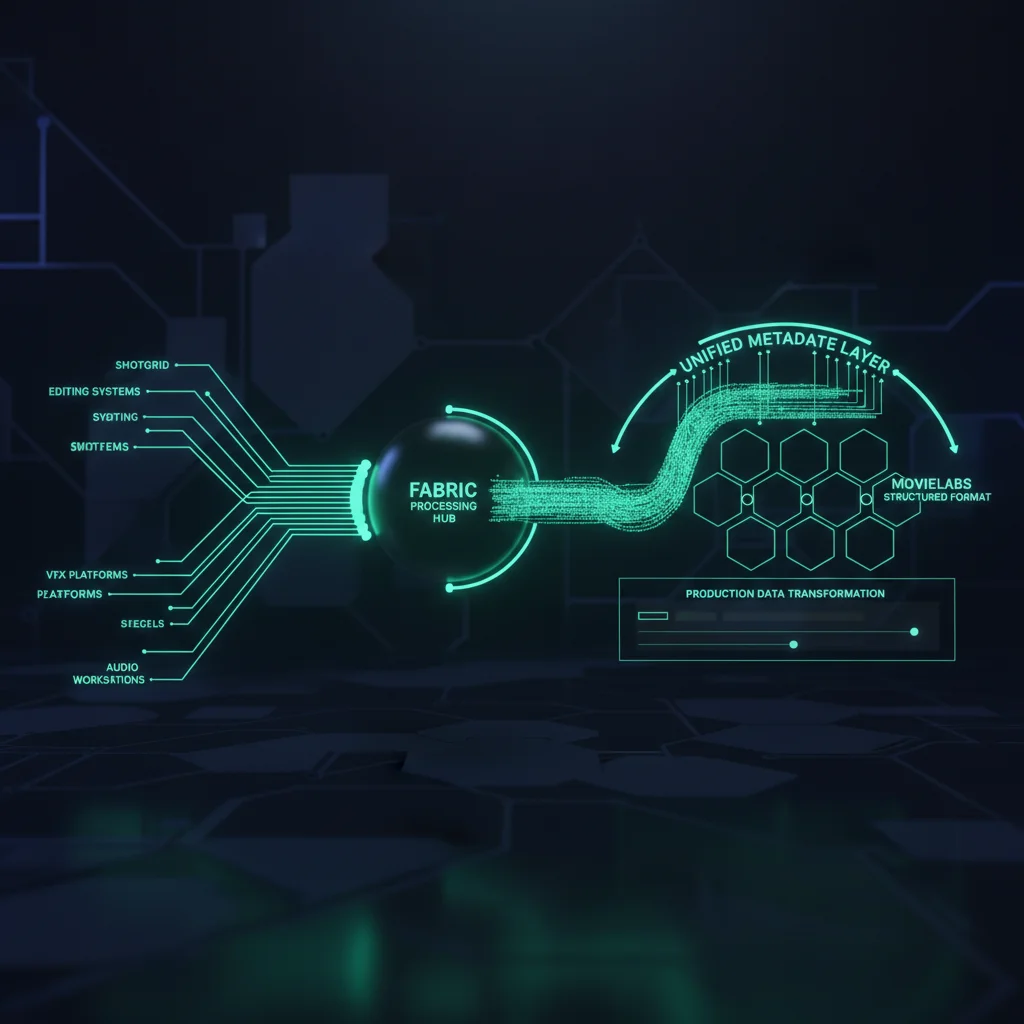

The Metadata Intelligence Layer

Productions generate chaos — thousands of files across dozens of folders with cryptic names. Fabric cracks the code. It connects to where the story lives, decodes your file paths into structured metadata, normalizes everything into MovieLabs 2030 standards, and makes every asset findable through natural-language AI search.

The Metadata Crisis in Production

Every production creates the same problem. Thousands of files. Dozens of systems. No connection between what a file IS and what it MEANS to the story.

Files Without Context

Your SAN has 50,000 files named comp_v003.exr. Without context, they're unsearchable noise. Six months after wrap, nobody knows what anything is.

Disconnected Systems

ShotGrid knows every shot, sequence, and character. Your storage knows every file path. Nothing connects the two. The story and the files live in separate worlds.

AI Can't Fix This Alone

AI can tag an image as 'person outdoors.' It can't know that the file is Shot 060 from Sequence 010, assigned to compositing, for Episode 3 of your show. That context lives in production systems.

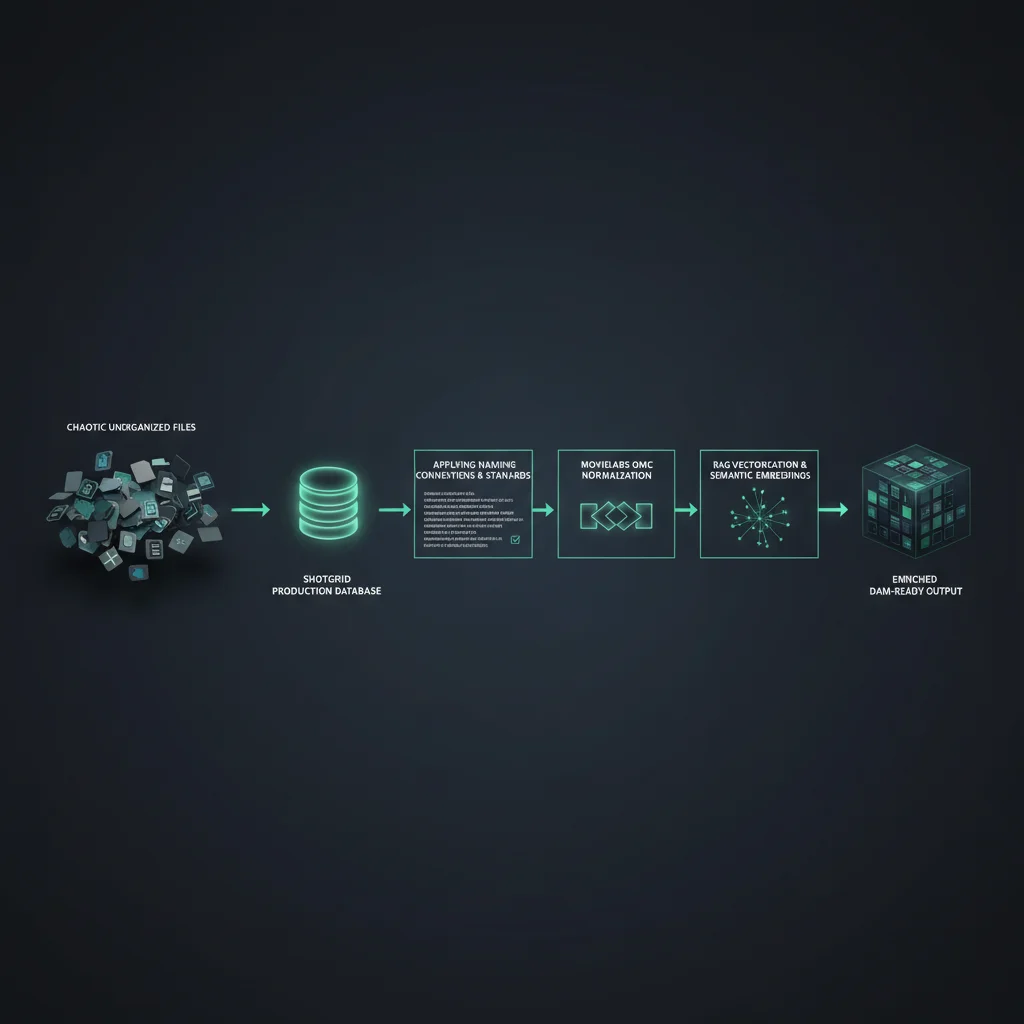

From Chaos to Context in Five Steps

Fabric doesn't just add AI to your files. It connects the systems that hold your production's story to the files themselves, then layers intelligence on top.

1. Connect to Where the Story Lives

Fabric integrates directly with ShotGrid, FileMaker, and SyncOnSet — the systems that hold your shots, sequences, characters, scenes, and episodes. Full OAuth2 authentication. Multi-connection support.

2. Define Your Naming Conventions

Tell Fabric how your production names files. The visual template builder parses paths like SHOW_SEQ010_SH060_comp_v003.exr into structured metadata using drag-and-drop segments mapped to DAM tag keys.

3. Normalize to MovieLabs 2030

Every piece of data — from ShotGrid entities to parsed file paths — is automatically transformed into MovieLabs OMC v2.6.1 ontology. 12 entity types. 10 controlled vocabularies. Industry-standard from day one.

4. Vectorize into a Searchable RAG

All normalized metadata is embedded and indexed into a vector database for semantic search. A locally-hosted language model powers conversational access — no cloud dependency.

5. Publish Enriched Metadata to DAM

Content-hash delta sync pushes only what changed. Real-time WebSocket progress. Tag key/value normalization. Your DAM receives production-contextualized, AI-enriched metadata automatically.
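The idea behind content-hash delta sync can be sketched in a few lines. This is a minimal illustration, not Fabric's implementation: the function names (`content_hash`, `compute_delta`), the record shape, and the choice of SHA-256 over canonical JSON are all assumptions made for the example.

```python
import hashlib
import json


def content_hash(record: dict) -> str:
    """Hash a metadata record deterministically (sorted keys, compact JSON)."""
    canonical = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def compute_delta(records: dict, last_synced: dict) -> dict:
    """Return only the records whose hash differs from the one stored at last sync."""
    return {
        asset_id: record
        for asset_id, record in records.items()
        if last_synced.get(asset_id) != content_hash(record)
    }


# Hypothetical records keyed by asset id.
records = {
    "asset-1": {"show": "DRAGON", "shot": "SH060", "ver": "003"},
    "asset-2": {"show": "DRAGON", "shot": "SH070", "ver": "001"},
}

# asset-1 was pushed previously and is unchanged; asset-2 has never been synced.
last_synced = {"asset-1": content_hash(records["asset-1"])}

delta = compute_delta(records, last_synced)
print(sorted(delta))  # ['asset-2']
```

Because the hash is computed over a canonical serialization, reordering keys in a record does not trigger a re-push; only a change in values does.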

MovieLabs 2030: We Don't Just Talk About It

Fabric is one of the first production implementations of the MovieLabs Ontology for Media Creation (OMC v2.6.1). We didn't build a wrapper. We built a transformation engine.

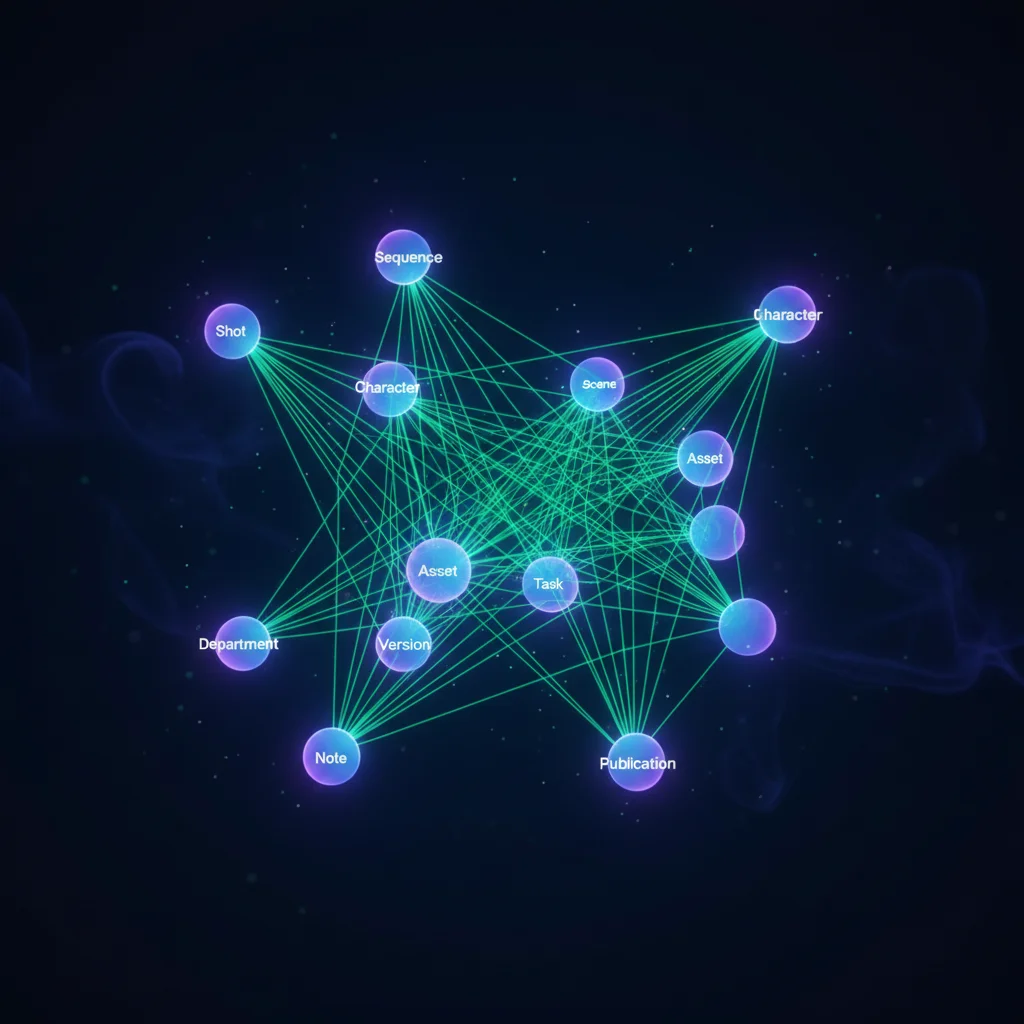

12 OMC Entity Types

Automated ShotGrid-to-OMC Transformation

When Fabric connects to ShotGrid, it doesn't just pull data — it transforms it. Every ShotGrid entity is automatically mapped to its OMC equivalent:

- ShotGrid "Shot" → OMC Shot entity

- ShotGrid "Sequence" → OMC Sequence entity

- ShotGrid "Asset" → OMC Asset with Character/Prop classification
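The three mappings above can be pictured as a small lookup plus one classification rule. The sketch below is illustrative only: the output dictionary is a simplified, hypothetical shape rather than the actual OMC schema, `to_omc` is an invented name, and it assumes the ShotGrid asset type arrives in the `sg_asset_type` field.

```python
# ShotGrid entity type -> OMC entity type, as listed above.
SG_TO_OMC = {"Shot": "Shot", "Sequence": "Sequence", "Asset": "Asset"}

# Assumed mapping from ShotGrid asset types to a Character/Prop classification.
ASSET_CLASSES = {"character": "Character", "prop": "Prop"}


def to_omc(sg_entity: dict) -> dict:
    """Map a ShotGrid entity dict to a simplified OMC-style dict (hypothetical shape)."""
    sg_type = sg_entity["type"]
    if sg_type not in SG_TO_OMC:
        raise ValueError(f"No OMC mapping for ShotGrid type {sg_type!r}")

    omc = {
        "entityType": SG_TO_OMC[sg_type],
        "name": sg_entity.get("code"),
        # Keep a pointer back to the source record for traceability.
        "source": {"system": "ShotGrid", "id": sg_entity["id"]},
    }
    if sg_type == "Asset":
        raw = str(sg_entity.get("sg_asset_type", "")).strip().lower()
        omc["classification"] = ASSET_CLASSES.get(raw, "Unclassified")
    return omc


shot = to_omc({"type": "Shot", "id": 1201, "code": "SH060"})
asset = to_omc({"type": "Asset", "id": 42, "code": "Dragon", "sg_asset_type": "Character"})
print(shot["entityType"], asset["classification"])  # Shot Character
```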

10 Controlled Vocabularies Enforced

- Asset Types (character, prop, environment, vehicle, FX...)

- Shot Status (ip, rev, apr, fin, omt, hld...)

- Department Codes (comp, anim, light, fx, model...)

- Task Types, Priority Levels, Delivery Formats...

Every value normalized. Every taxonomy consistent. Every archive queryable decades from now.
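Enforcing a controlled vocabulary amounts to mapping each incoming value onto an allowed code and rejecting anything else. A minimal sketch, using the shot status codes listed above; the alias table and the `normalize_status` name are assumptions for illustration, and a real deployment would define its own aliases.

```python
# Status codes taken from the Shot Status vocabulary listed above.
SHOT_STATUS_CODES = {"ip", "rev", "apr", "fin", "omt", "hld"}

# Hypothetical aliases for free-form values entered by users or other systems.
STATUS_ALIASES = {
    "in progress": "ip",
    "pending review": "rev",
    "approved": "apr",
    "final": "fin",
    "omit": "omt",
    "on hold": "hld",
}


def normalize_status(raw: str) -> str:
    """Return the controlled-vocabulary code for a raw status, or raise ValueError."""
    key = " ".join(raw.strip().lower().split())  # trim, lowercase, collapse spaces
    code = STATUS_ALIASES.get(key, key)
    if code not in SHOT_STATUS_CODES:
        raise ValueError(f"{raw!r} is not in the shot status vocabulary")
    return code


print(normalize_status("  Approved "))  # apr
print(normalize_status("HLD"))          # hld
```

Rejecting unknown values instead of passing them through is what keeps the vocabulary closed; a lenient variant could route them to a review queue.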

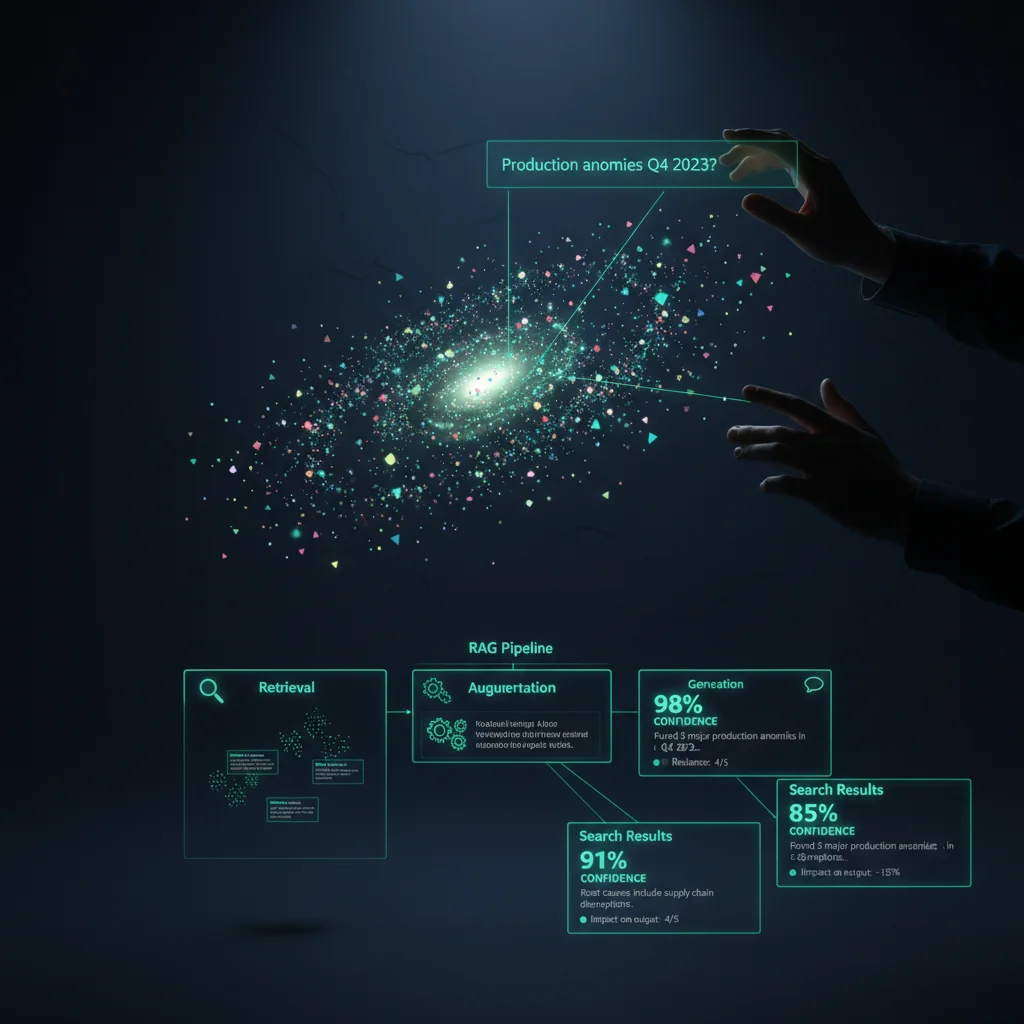

Ask Your Production Anything

Once Fabric normalizes your metadata, it becomes conversationally searchable. Natural-language queries return precise results with source citations and similarity scores.

How It Works

3-Tier Intelligent Query Routing

- Listing queries (e.g., "list all assets") skip vector search entirely and go straight to a direct database lookup.

- Filtered queries (e.g., "approved shots in episode 3") use hybrid search: structured filters plus semantic matching.

- Semantic queries (e.g., "show me everything related to the final battle") use the full RAG pipeline with E5-large-v2 embeddings.
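To make the three tiers concrete, here is a toy router. How Fabric actually classifies queries is not described here, so the regular expressions and the `route_query` name are purely hypothetical heuristics chosen to reproduce the three example queries.

```python
import re
from enum import Enum


class Route(Enum):
    LISTING = "direct database lookup"
    FILTERED = "hybrid search"
    SEMANTIC = "full RAG pipeline"


# Assumed heuristic: "list all <things>" style queries need no vector search.
LISTING_RE = re.compile(r"^(list|show|count)\s+all\s+\w+$", re.IGNORECASE)

# Assumed heuristic: status words or numbered entities imply structured filters.
FILTER_RE = re.compile(
    r"\b(approved|on hold|omitted|episode\s+\d+|sequence\s+\d+)\b", re.IGNORECASE
)


def route_query(query: str) -> Route:
    """Pick the cheapest tier that can answer the query."""
    text = query.strip()
    if LISTING_RE.match(text):
        return Route.LISTING
    if FILTER_RE.search(text):
        return Route.FILTERED
    return Route.SEMANTIC


for q in (
    "list all assets",
    "approved shots in episode 3",
    "show me everything related to the final battle",
):
    print(f"{q!r} -> {route_query(q).value}")
```

Checking the cheapest tier first is the design point: a listing query never pays the cost of embedding and vector search.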

Source Citations

Every result shows which data source contributed (ShotGrid connection, naming convention, DAM sync). Similarity scores give confidence levels. Full traceability from question to answer to source.
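A result with a source citation and a similarity score might look like the output of the sketch below. It uses cosine similarity over toy 3-dimensional vectors standing in for real embeddings; the index contents, field names, and `search` function are assumptions for illustration, not Fabric's API.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


# Hypothetical index entries: each keeps the data source that contributed it.
INDEX = [
    {"id": "SH060", "source": "ShotGrid connection", "vector": [0.9, 0.1, 0.0]},
    {"id": "comp_v003.exr", "source": "naming convention", "vector": [0.7, 0.7, 0.0]},
    {"id": "prop_sword", "source": "DAM sync", "vector": [0.0, 0.2, 0.9]},
]


def search(query_vector, index, top_k=2):
    """Return the top_k entries ranked by similarity, each with its source."""
    scored = [
        {
            "id": entry["id"],
            "source": entry["source"],
            "score": round(cosine(query_vector, entry["vector"]), 3),
        }
        for entry in index
    ]
    return sorted(scored, key=lambda r: r["score"], reverse=True)[:top_k]


results = search([1.0, 0.0, 0.0], INDEX)
for r in results:
    print(r["id"], r["source"], r["score"])
```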

On-Premises AI

E5-large-v2 embeddings: self-hosted, 1024 dimensions. Llama 3.1 8B: runs locally, no data leaves your network. No cloud AI dependency. Your production data stays yours.

Decode Any File Path Into Structured Metadata

Productions already encode meaning into file names. Fabric's visual template builder captures that logic and applies it automatically to every file.

Template Definition

Applied to: DRAGON_SEQ010_SH060_comp_v003.exr

| Tag Key | Extracted Value |
|---|---|
| show | DRAGON |
| seq | SEQ010 |
| shot | SH060 |
| dept | comp |
| ver | 003 |
| ext | exr |

→ Maps to OMC: Shot SH060 in Sequence SEQ010

→ Department: Compositing | Version: 3

Drag-and-Drop Segments

Each segment of your naming convention is a draggable block mapped to a DAM tag key. The builder shows a live preview of how any file path will be parsed. Supports separators ( _ - . / ), optional segments, and regex patterns.
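One way to think about a template is as an ordered list of segments compiled into a single regular expression. The sketch below shows that idea for the example file name; the `Segment` class, `compile_template`, and `parse_filename` are hypothetical names, and it covers only required segments with one separator, not optional segments.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Segment:
    tag_key: str       # DAM tag key the captured value is stored under
    pattern: str       # regex for the segment's value
    prefix: str = ""   # literal text before the value, not captured


# Assumed template for names like SHOW_SEQ010_SH060_comp_v003.exr
TEMPLATE = [
    Segment("show", r"[A-Za-z0-9]+"),
    Segment("seq", r"SEQ\d+"),
    Segment("shot", r"SH\d+"),
    Segment("dept", r"[a-z]+"),
    Segment("ver", r"\d+", prefix="v"),
]


def compile_template(segments, separator="_"):
    """Join segments into one anchored regex with a named group per tag key."""
    parts = [f"{re.escape(s.prefix)}(?P<{s.tag_key}>{s.pattern})" for s in segments]
    body = re.escape(separator).join(parts)
    return re.compile(rf"^{body}\.(?P<ext>[A-Za-z0-9]+)$")


def parse_filename(name, pattern):
    """Return a dict of tag key -> value, or None if the name does not match."""
    match = pattern.match(name)
    return match.groupdict() if match else None


pattern = compile_template(TEMPLATE)
tags = parse_filename("DRAGON_SEQ010_SH060_comp_v003.exr", pattern)
print(tags)
```

For the example file this yields the same six tag keys and values as the table above. A name that does not fit the template returns `None`, which gives a live preview something explicit to flag.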

Connects to the Systems That Matter

Fabric doesn't ask you to change how you work. It integrates directly with the production tracking, storage, and DAM systems you already use.

ShotGrid

OAuth2 authentication, multi-connection support, field discovery with sample data preview, bidirectional field mapping, real-time entity sync

FileMaker

Production database sync, custom layout mapping, script trigger support for real-time updates

SyncOnSet

On-set continuity data, character/wardrobe/prop tracking, scene metadata from production floor

Backlot DAM (Warner Bros.)

Encrypted credential storage, tag key/value sync, push-to-DAM with content-hash delta sync, WebSocket real-time progress

Vector Search

Vector indexing, hybrid search, OMC-native schema for semantic queries

Metadata Store

Document store for OMC entities, connection configurations, naming conventions, and enrichment metadata

Custom connectors available for enterprise deployments. Fabric's connector architecture is designed for extensibility.

The Complete Enrichment Pipeline

From raw file path to production-ready metadata in seconds.

1. Fabric watches configured storage paths for new files

2. Naming convention extracts show/seq/shot/dept/version

3. ShotGrid/FileMaker data matched to parsed context

4. All data transformed to MovieLabs OMC v2.6.1

5. Embedding model generates high-dimensional semantic vectors

6. Vector database stores embeddings + structured OMC data

7. Delta sync pushes enriched metadata to DAM

8. File is now conversationally searchable

Every Production Needs This

Active Productions

Connect ShotGrid from day one. Every file ingested automatically inherits production context. No manual tagging.

Post-Production Archives

Apply naming conventions to legacy archives. Millions of files retroactively gain searchable metadata.

Studio Asset Libraries

Characters, props, environments — all classified by OMC type and searchable across every production.

Compliance & Auditing

MovieLabs 2030 compliance from ingest to archive. Every metadata transformation logged and traceable.

DAM Enrichment

Stop hand-tagging. Fabric parses paths, pulls production context, and publishes normalized tags to your DAM automatically.

Make Every File Findable

See how Fabric connects your production systems, decodes your file paths, and makes every asset in your pipeline searchable.

Frequently Asked Questions

Fabric currently integrates with ShotGrid (Autodesk Flow Production Tracking) via full OAuth2, FileMaker, and SyncOnSet. Custom connectors are available for enterprise deployments. DAM publishing is supported for Warner Bros. Backlot with additional DAM targets in development.

Fabric does not depend on cloud AI. It runs E5-large-v2 embeddings and Llama 3.1 8B entirely on-premises, and no production data is sent to external AI services. This is by design: studios require full data sovereignty.

The Ontology for Media Creation (OMC) is the industry-standard data model defined by MovieLabs for the 2030 Vision. It defines how production entities (shots, sequences, characters, assets) should be described and related. Adopting OMC means your metadata is future-proof, interoperable, and queryable by any OMC-compliant system.

You define a template that describes your file naming pattern using a visual drag-and-drop builder. Each segment (show code, sequence, shot number, department, version) is mapped to a DAM tag key. When files are ingested, Fabric parses the path against your template and automatically extracts structured metadata.

Fabric is designed for production-scale workloads. It uses vector indexing for semantic search and a document store for metadata storage, and the 3-tier query routing system keeps simple listing queries fast even at scale.

AI tagging identifies what an image looks like ("person," "outdoor," "blue"). Fabric identifies what a file MEANS to the production ("Shot 060 in the Dragon Chase sequence, compositing department, version 3, assigned to Artist X, status: approved"). This production context comes from integrating with ShotGrid and other production systems — something AI vision models cannot do.

Fabric does not compete with Frame.io (review/approval) or Signiant (file transfer); they are potential Fabric clients, not competitors. Fabric enriches metadata at a layer these tools do not operate in.